Synthesizer

A sound synthesizer (often abbreviated as "synthesizer" or "synth") is an electronic instrument capable of producing a wide range of sounds. Synthesizers may either imitate other instruments ("imitative synthesis") or generate new timbres. They can be played (controlled) via a variety of input devices, including keyboards, music sequencers and instrument controllers. Synthesizers generate electric signals (waveforms), which are then converted to sound through loudspeakers or headphones.

Synthesizers use a number of different technologies or programmed algorithms to generate signals, each with its own strengths and weaknesses. Among the most popular waveform synthesis techniques are subtractive synthesis, additive synthesis, wavetable synthesis, frequency modulation synthesis, phase distortion synthesis, physical modeling synthesis and sample-based synthesis. Other sound synthesis methods, such as the subharmonic synthesis used on the Mixture Trautonium and granular synthesis (which produces soundscapes or clouds of sound), are rarely used. (See #Types of synthesis)

Synthesizers are often controlled with a piano-style keyboard. Other forms of controllers resemble fingerboards, guitars (guitar synthesizer), violins, wind instruments (wind controller), drums and percussion (electronic drum), etc. (See #Control interfaces) Synthesizers without built-in controllers are often called sound modules, and are controlled via MIDI or CV/Gate methods.

History

The beginnings of the synthesizer are difficult to trace, as there is often confusion between sound synthesizers and arbitrary electric/electronic musical instruments.

- Early electric instruments

For example, one of the earliest electric musical instruments was invented in 1876 by American electrical engineer Elisha Gray, who accidentally discovered that he could control sound from a self-vibrating electromagnetic circuit. In doing so, he invented a basic single note oscillator. This musical telegraph used steel reeds, whose oscillations were created and transmitted over a telegraphy line by electromagnets. Gray also built a simple loudspeaker device into later models, consisting of a vibrating diaphragm in a magnetic field, to make the oscillator audible.

This instrument was a remote electromechanical musical instrument using telegraphy and electric buzzers. Though it had no sound synthesis function, some have erroneously called it the first synthesizer.

- Early additive synthesizer – Tonewheel organs

In 1897, Thaddeus Cahill invented the Teleharmonium (or Dynamophone), which used dynamos (early electric generators) and was capable of additive synthesis, as was the Hammond organ, invented later in 1934. However, Cahill's business was unsuccessful for various reasons (e.g. the enormous scale of the system, the rapid evolution of electronics, and crosstalk issues on the telephone line), and similar but more compact instruments were developed one after another.

- Emergence of electronics – Theremin (1920), Ondes Martenot (1928), and Trautonium (1929)

In 1906, a revolution in electronics began when American engineer Lee De Forest invented the world's first amplifying vacuum tube, called the Audion tube. This led to new technologies, including radio and sound film for entertainment. These new technologies also influenced the music industry, and resulted in various early electronic musical instruments that used vacuum tubes, including:

- Audion piano by Lee De Forest in 1915

- Theremin by Léon Theremin in 1920

- Ondes Martenot by Maurice Martenot in 1928

- Trautonium by Friedrich Trautwein in 1929

Most of these early instruments used heterodyne circuits to produce audio frequencies. Their sound synthesis capabilities were initially limited, but after more than a decade of development they gained sufficient expressive ability.

- Graphical sound

Also in the 1920s, Arseny Avraamov developed various systems of graphic sonic art, and similar graphical sound systems were developed around the world, one after another. In 1938, USSR engineer Yevgeny Murzin invented a design for a music-composition tool called ANS, one of the earliest conceptions of a real-time additive synthesizer using optoelectronics. Although his idea of reconstructing a sound from its visible image was apparently simple, it was only realized 20 years later, in 1958, because his professional field was not related to music.

- Subtractive synthesis & polyphonic synthesizer

In the 1930s and 1940s, the basic elements required for modern analog subtractive synthesizers (audio oscillators, audio filters, envelope controllers, and various effects units) had already appeared and were used in several electronic instruments. The earliest polyphonic synthesizers even went into commercial production in Germany and the United States. The Warbo Formant Organ, developed by Harald Bode in Germany in 1937, was a four-voice key-assignment keyboard with two formant filters and a dynamic envelope controller; it eventually went into commercial production at a factory in Dachau. The Hammond Novachord, released in 1939, was an electronic keyboard that used a frequency divider for sound generation, with vibrato, a filter, a resonator network and a dynamic envelope controller. During the three years that Hammond manufactured this model, it shipped 1,069 units, but production was discontinued at the start of World War II. Both instruments were forerunners of later electronic organs and polyphonic synthesizers.

- Monophonic electronic keyboards – Hammond Solovox (1940), Ondioline (1941), Clavioline (1947), Clavivox (1952)

Georges Jenny built his first ondioline in France in 1941.

- Other innovations

In the late 1940s, Canadian inventor and composer Hugh Le Caine invented the Electronic Sackbut, which provided the earliest real-time control of three aspects of sound (volume, pitch and timbre), corresponding to today's touch-sensitive keyboards and pitch and modulation controllers. The controllers were initially implemented as a multidimensional pressure keyboard in 1945, then changed to a group of dedicated controllers operated by the left hand in 1948.

In Japan, as early as 1935, Yamaha developed the Magna organ, their first synthesizer based on the Keyboard theremin. In 1949, Japanese composer Minao Shibata discussed the concept of "a musical instrument with very high performance" that can "synthesize any kind of sound waves" and is "...operated very easily," predicting that with such an instrument, "...the music scene will be changed drastically."

- Electronic music studios as "sound synthesizer"

After World War II, electronic music including electroacoustic music and musique concrète was created by contemporary composers, and numerous electronic music studios were established around the world, especially in Bonn, Cologne, Paris and Milan. These studios were typically filled with electronic equipment including oscillators, filters, tape recorders, audio consoles, etc., and the whole studio functioned as a "sound synthesizer".

- Origin of the term "sound synthesizer"

In 1951–1952, RCA produced a machine called the Electronic Music Synthesizer; however, it was more accurately a composition machine, because it did not produce sounds in real time. RCA then developed the first programmable sound synthesizer, the RCA Mark II Sound Synthesizer, and installed it at the Columbia-Princeton Electronic Music Centre in 1957. Prominent composers including Vladimir Ussachevsky, Otto Luening, Milton Babbitt, Halim El-Dabh, Bülent Arel, Charles Wuorinen, and Mario Davidovsky used the RCA Synthesizer extensively in various compositions.

Modular synthesizer

In 1959–1960, Harald Bode developed a modular synthesizer and sound processor, and in 1961 he wrote a paper exploring the concept of a self-contained portable modular synthesizer using newly emerging transistor technology. He also served as AES session chairman on music and electronics for the fall conventions in 1962 and 1964. His ideas were subsequently adopted by Donald Buchla, Robert Moog, and others.

Robert Moog released the first commercially available modern synthesizer in 1965. In the late 1960s to 1970s, the development of miniaturized solid-state components allowed synthesizers to become self-contained, portable instruments, as proposed by Harald Bode in 1961. By the early 1980s companies were selling compact, modestly priced synthesizers to the public. This, along with the development of Musical Instrument Digital Interface (MIDI), made it easier to integrate and synchronize synthesizers and other electronic instruments for use in musical composition. In the 1990s, synthesizer emulations began to appear in computer software, known as software synthesizers. Later, VST and other plugins were able to emulate classic hardware synthesizers to a moderate degree.

Audio sample: Wendy Carlos, Switched-On Bach (1968). First Movement (Allegro) of Brandenburg Concerto Number 3 played on synthesizer.

The synthesizer had a considerable effect on 20th century music. Micky Dolenz of The Monkees bought one of the first Moog synthesizers. The band was the first to release an album featuring a Moog, Pisces, Aquarius, Capricorn & Jones Ltd., in 1967. It reached #1 in the charts. A few months later, both the Rolling Stones' "2000 Light Years from Home" and the title track of the Doors' 1967 album Strange Days also featured a Moog, played by Brian Jones and Paul Beaver respectively. Wendy Carlos's Switched-On Bach (1968), recorded using Moog synthesizers, also influenced numerous musicians of that era and is one of the most popular recordings of classical music ever made. So are the records of Isao Tomita (particularly Snowflakes are Dancing in 1974), who in the early 1970s used synthesizers to create new artificial sounds (rather than simply mimicking real instruments) and made significant advances in analog synthesizer programming.

The sound of the Moog also reached the mass market with Simon and Garfunkel's Bookends in 1968 and The Beatles' Abbey Road the following year; hundreds of other popular recordings subsequently used synthesizers. Electronic music albums by Beaver and Krause, Tonto's Expanding Head Band, The United States of America, and White Noise reached a sizable cult audience, and progressive rock musicians such as Richard Wright of Pink Floyd and Rick Wakeman of Yes were soon using the new portable synthesizers extensively. Other early users included Emerson, Lake & Palmer's Keith Emerson, Pete Townshend, and The Crazy World of Arthur Brown's Vincent Crane. The Perrey and Kingsley album The In Sound From Way Out!, which used the Moog and tape loops among other techniques, was released in 1966. The first UK number one single to feature a Moog prominently was Chicory Tip's 1972 hit Son of My Father.

Synthpop

From the early to mid-1970s, Jean Michel Jarre, Larry Fast, and Vangelis released successful electronic instrumental albums. The emergence of Synthpop, a sub-genre of New Wave, in the late 1970s can be largely credited to synthesizer technology. The ground-breaking work of all-electronic German bands such as Kraftwerk and Tangerine Dream, of David Bowie during his Berlin period (1976–1977), and of the Japanese Yellow Magic Orchestra and the British musician Gary Numan was crucial in the development of the genre.

English musician Gary Numan's 1979 hits "Are 'Friends' Electric?" and "Cars" used synthesizers heavily. OMD's "Enola Gay" (1980) used distinctive electronic percussion and a synthesized melody. Soft Cell used a synthesized melody on their 1981 hit "Tainted Love". Nick Rhodes, keyboardist of Duran Duran, used various synthesizers, including the lesser-known Roland Jupiter-4 and the Jupiter-8.

Other chart hits include Depeche Mode's "Just Can't Get Enough" (1981), The Human League's "Don't You Want Me", and Giorgio Moroder's "Flashdance... What a Feeling" (1983) for Irene Cara. Other notable synthpop groups included New Order, Visage, Japan, Ultravox, Spandau Ballet, Culture Club, Eurythmics, Yazoo, Thompson Twins, A Flock of Seagulls, Erasure, Blancmange, Kajagoogoo, Devo, and the early work of Tears for Fears. Other notable users include Giorgio Moroder, Howard Jones, Kitaro, Stevie Wonder, Peter Gabriel, Thomas Dolby, Kate Bush, and Frank Zappa.

The synthesizer then became one of the most important instruments in the music industry.

Types of synthesis

Additive synthesis builds sounds by adding together waveforms (which are usually harmonically related). Early analog examples of additive synthesizers are the Teleharmonium and the Hammond organ. For real-time additive synthesis, wavetable synthesis is useful for reducing the required hardware or processing power, and is commonly used in low-end MIDI instruments (such as educational keyboards) and low-end sound cards.
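
The idea of summing harmonically related waveforms can be illustrated with a short sketch. The sample rate, function name and harmonic amplitudes below are assumptions chosen for illustration, not values taken from any particular instrument.

```python
import math

SAMPLE_RATE = 44100  # samples per second (an assumed, common value)

def additive_tone(fundamental_hz, harmonic_amps, duration_s, sample_rate=SAMPLE_RATE):
    """Sum sine waves at integer multiples of the fundamental.

    harmonic_amps[k] is the amplitude of harmonic number k + 1.
    """
    n_samples = round(duration_s * sample_rate)
    samples = []
    for n in range(n_samples):
        t = n / sample_rate
        value = sum(
            amp * math.sin(2 * math.pi * fundamental_hz * (k + 1) * t)
            for k, amp in enumerate(harmonic_amps)
        )
        samples.append(value)
    return samples

# Amplitudes 1, 1/2, 1/3, ... give a sawtooth-like timbre from eight partials.
saw_like = additive_tone(220.0, [1 / k for k in range(1, 9)], 0.01)
```

Changing the amplitude list changes the timbre while the pitch stays the same, which is roughly what the drawbars of a tonewheel organ do.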

Subtractive synthesis is based on filtering harmonically rich waveforms. Due to its simplicity, it is the basis of early synthesizers such as the Moog synthesizer. Subtractive synthesizers use a simple acoustic model that assumes an instrument can be approximated by a simple signal generator (producing sawtooth waves, square waves, etc.) followed by a filter. The combination of simple modulation routings (such as pulse width modulation and oscillator sync), along with the physically unrealistic lowpass filters, is responsible for the "classic synthesizer" sound commonly associated with "analog synthesis" and often mistakenly used when referring to software synthesizers using subtractive synthesis.
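
The "signal generator followed by a filter" model can be sketched as follows. This is a minimal illustration under simplifying assumptions: the sawtooth is naive (not band-limited) and the filter is a first-order low-pass, whereas classic analog synthesizers typically use steeper, resonant filters.

```python
import math

def sawtooth(freq_hz, n_samples, sample_rate=44100):
    """Naive sawtooth wave in the range [-1, 1), rich in harmonics."""
    return [2.0 * ((freq_hz * n / sample_rate) % 1.0) - 1.0 for n in range(n_samples)]

def one_pole_lowpass(signal, cutoff_hz, sample_rate=44100):
    """First-order low-pass filter: progressively attenuates content above cutoff_hz."""
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    out, y = [], 0.0
    for x in signal:
        y += alpha * (x - y)  # move the output a fraction of the way toward the input
        out.append(y)
    return out

raw = sawtooth(110.0, 4410)          # bright, buzzy source
dark = one_pole_lowpass(raw, 300.0)  # same pitch, upper harmonics reduced
```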

FM synthesis (frequency modulation synthesis) is a process that usually involves the use of at least two signal generators (sine-wave oscillators, commonly referred to as "operators" in FM-only synthesizers) to create and modify a voice. Often, this is done through the analog or digital generation of a signal that modulates the tonal and amplitude characteristics of a base carrier signal. FM synthesis was pioneered by John Chowning, who patented the idea and sold it to Yamaha. Unlike the exponential relationship between voltage-in-to-frequency-out and multiple waveforms in classical 1-volt-per-octave synthesizer oscillators, Chowning-style FM synthesis uses a linear voltage-in-to-frequency-out relationship and sine-wave oscillators. The resulting complex waveform may have many component frequencies, and there is no requirement that they all bear a harmonic relationship. Sophisticated FM synths such as the Yamaha DX-7 series can have 6 operators per voice; some synths with FM can also often use filters and variable amplifier types to alter the signal's characteristics into a sonic voice that either roughly imitates acoustic instruments or creates sounds that are unique. FM synthesis is especially valuable for metallic or clangorous noises such as bells, cymbals, or other percussion.
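
A two-operator voice can be sketched in a few lines. The formulation below, in which a sine-wave modulator is added to the phase of a sine-wave carrier, is one common way to write simple FM; the function name, frequencies and modulation index are illustrative assumptions.

```python
import math

def fm_tone(carrier_hz, modulator_hz, index, n_samples, sample_rate=44100):
    """Two-operator FM: a sine modulator varies the phase of a sine carrier.

    `index` (the modulation index) controls how strong the sidebands are.
    """
    out = []
    for n in range(n_samples):
        t = n / sample_rate
        modulator = math.sin(2 * math.pi * modulator_hz * t)
        out.append(math.sin(2 * math.pi * carrier_hz * t + index * modulator))
    return out

# A non-integer carrier-to-modulator ratio (1 : 1.4) gives inharmonic, bell-like partials.
bell_like = fm_tone(200.0, 280.0, 5.0, 4410)
# With index 0 the modulator has no effect and the result is a plain sine wave.
plain = fm_tone(200.0, 280.0, 0.0, 4410)
```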

Phase distortion synthesis is a method implemented on Casio CZ synthesizers. It is quite similar to FM synthesis but avoids infringing on the Chowning FM patent. It can be categorized both as modulation synthesis, along with FM synthesis, and as distortion synthesis, along with waveshaping synthesis and discrete summation formulas.

Granular synthesis is a type of synthesis based on manipulating very small sample slices.

Physical modelling synthesis is the synthesis of sound by using a set of equations and algorithms to simulate a real instrument, or some other physical source of sound. This involves taking models of the components of musical objects and creating systems that define action, filters, envelopes and other parameters over time. The range of such instruments is virtually limitless, as one can combine any available models with any number of modulation sources in terms of pitch, frequency and contour: for example, the model of a violin with the characteristics of a pedal steel guitar and perhaps the action of a piano hammer. When an initial set of parameters is run through the physical simulation, the simulated sound is generated. Although physical modeling was not a new concept in acoustics and synthesis, it was not until the development of the Karplus-Strong algorithm and the increase in DSP power in the late 1980s that commercial implementations became feasible. Physical modeling on computers becomes faster and more detailed as processing power increases.
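
The Karplus-Strong algorithm mentioned above is compact enough to sketch. The version below is a basic plucked-string variant: a delay line is filled with noise and repeatedly averaged. The damping factor, seed and function name are assumptions made for this illustration.

```python
import random

def karplus_strong(freq_hz, n_samples, sample_rate=44100, damping=0.996, seed=0):
    """Basic Karplus-Strong plucked string.

    A delay line one period long is filled with noise (the "pluck"); each
    sample is then replaced by a damped average of itself and its neighbour,
    which acts as a gentle low-pass filter inside the feedback loop.
    """
    rng = random.Random(seed)
    period = max(2, round(sample_rate / freq_hz))
    delay_line = [rng.uniform(-1.0, 1.0) for _ in range(period)]
    out = []
    for n in range(n_samples):
        i = n % period
        current = delay_line[i]
        neighbour = delay_line[(i + 1) % period]
        out.append(current)
        delay_line[i] = damping * 0.5 * (current + neighbour)
    return out

plucked = karplus_strong(220.0, 22050)  # half a second of a decaying string-like tone
```

The pitch is set by the length of the delay line, and the high harmonics fade faster than the low ones, much as they do on a real string.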

Sample-based synthesis is one of the easiest synthesis systems: a real instrument is recorded as a digitized waveform, and the recording is then played back at different speeds to produce different tones. This is the technique used in "sampling". Most samplers designate a part of the sample for each component of the ADSR envelope, and then repeat that section while changing the volume for that segment of the envelope. This lets the sampler produce a convincingly different envelope from the same recorded note. See also Wavetable synthesis, Vector synthesis, etc.
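
Playing a recording back at different speeds can be sketched with linear interpolation between stored samples. The "recording" here is a stand-in sine wave and the function name is hypothetical; real samplers use better interpolation and looping.

```python
import math

def resample_playback(sample, speed):
    """Read through a recorded waveform at `speed` times its original rate.

    speed 2.0 raises the pitch by an octave and halves the duration;
    2 ** (1 / 12) raises it by one equal-tempered semitone.
    """
    out = []
    position = 0.0
    while position < len(sample) - 1:
        i = int(position)
        frac = position - i
        # Linear interpolation between the two nearest stored samples.
        out.append(sample[i] * (1.0 - frac) + sample[i + 1] * frac)
        position += speed
    return out

# Stand-in for a digitized instrument recording: ten cycles of a sine wave.
recorded = [math.sin(2 * math.pi * n / 100) for n in range(1000)]
octave_up = resample_playback(recorded, 2.0)
semitone_up = resample_playback(recorded, 2 ** (1 / 12))
```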

Analysis/resynthesis is a form of synthesis that uses a series of bandpass filters or Fourier transforms to analyze the harmonic content of a sound. The resulting analysis data is then used in a second stage to resynthesize the sound using a bank of oscillators. The vocoder, linear predictive coding, and some forms of speech synthesis are based on analysis/resynthesis.
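
The two stages can be sketched with a naive discrete Fourier transform for the analysis and one cosine oscillator per frequency bin for the resynthesis. This is a small illustration only: the function names are hypothetical, the transform is deliberately unoptimized, and the Nyquist bin is ignored.

```python
import cmath
import math

def analyze(signal):
    """Naive DFT: return an (amplitude, phase) pair for each bin below Nyquist."""
    n = len(signal)
    partials = []
    for k in range(n // 2):
        coeff = sum(signal[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        scale = 1.0 if k == 0 else 2.0  # non-DC bins also carry their mirror image
        partials.append((scale * abs(coeff) / n, cmath.phase(coeff)))
    return partials

def resynthesize(partials, n):
    """Oscillator bank: sum one cosine oscillator per analyzed bin."""
    return [
        sum(amp * math.cos(2 * math.pi * k * t / n + phase)
            for k, (amp, phase) in enumerate(partials))
        for t in range(n)
    ]

N = 64
original = [0.8 * math.sin(2 * math.pi * 3 * t / N) + 0.3 * math.cos(2 * math.pi * 7 * t / N)
            for t in range(N)]
partials = analyze(original)
rebuilt = resynthesize(partials, N)
```

Editing the analysis data between the two stages (for example, scaling some amplitudes) is what makes the approach useful for sound transformation.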

Imitative synthesis

Sound synthesis can be used to mimic acoustic sound sources. Generally, a sound that does not change over time includes a fundamental partial or harmonic, and any number of partials. Synthesis may attempt to mimic the amplitude and pitch of the partials in an acoustic sound source.

When natural sounds are analyzed in the frequency domain (as on a spectrum analyzer), their spectra exhibit amplitude spikes at each of the fundamental tone's harmonics, corresponding to resonant properties of the instruments (spectral peaks that are also referred to as formants). Some harmonics may have higher amplitudes than others. The specific set of harmonic-vs-amplitude pairs is known as a sound's harmonic content. A synthesized sound requires accurate reproduction of the original sound in both the frequency domain and the time domain. A sound does not necessarily have the same harmonic content throughout its duration. Typically, high-frequency harmonics die out more quickly than the lower harmonics.

In most conventional synthesizers, for purposes of re-synthesis, recordings of real instruments are composed of several components representing the acoustic responses of different parts of the instrument, the sounds produced by the instrument during different parts of a performance, or the behaviour of the instrument under different playing conditions (pitch, intensity of playing, fingering, etc.)

Components

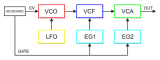

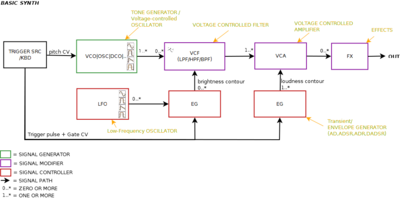

Synthesizers generate sound through various analogue and digital techniques. Early synthesizers were analog hardware based but many modern synthesizers use a combination of DSP software and hardware or else are purely software-based (see softsynth). Digital synthesizers often emulate classic analog designs. Sound is controllable by the operator by means of circuits or virtual stages that may include:

- Electronic oscillators – create raw sounds with a timbre that depends upon the waveform generated. Voltage-controlled oscillators (VCOs) and digital oscillators may be used. Harmonic Additive synthesis models sounds directly from pure sine waves, somewhat in the manner of an organ, while Frequency modulation and Phase distortion synthesis use one oscillator to modulate another. Subtractive synthesis depends upon filtering a harmonically rich oscillator waveform. Sample-based and Granular synthesis use one or more digitally recorded sounds in place of an oscillator.

- Voltage-controlled filter (VCF) – shapes the sound generated by the oscillators in the frequency domain, often under the control of an envelope or LFO. These are essential to subtractive synthesis.

- Voltage-controlled amplifier (VCA) – After the signal has been generated by one voltage-controlled oscillator (or a mix of several), modified by filters and LFOs, and shaped (contoured) by an ADSR envelope generator, it passes on to one or more voltage-controlled amplifiers (VCAs). The VCA is a preamp that boosts (amplifies) the electronic signal before passing it on to an external or built-in power amplifier. It is also a means of controlling the signal's volume, using an attenuator that acts on a control voltage (coming from the keyboard or other trigger source), which in turn sets the gain of the VCA.

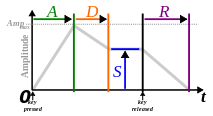

- ADSR envelopes – provide envelope modulation to "shape" the volume or harmonic content of the produced note in the time domain, with the principal parameters being attack, decay, sustain and release. These are used in most forms of synthesis. ADSR control is provided by envelope generators.

- Low frequency oscillator (LFO) – an oscillator of adjustable frequency that can be used to modulate the sound rhythmically, for example to create tremolo or vibrato or to control a filter's operating frequency. LFOs are used in most forms of synthesis.

- Other sound processing effects such as ring modulators may be encountered.

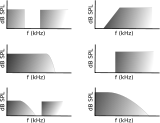

Filter

Electronic filters are particularly important in subtractive synthesis, being designed to pass some frequency regions through unattenuated while significantly attenuating ("subtracting") others. The low-pass filter is most frequently used, but band-pass filters, band-reject filters and high-pass filters are also sometimes available.

The filter may be controlled with a second ADSR envelope. An "envelope modulation" ("env mod") parameter on many synthesizers with filter envelopes determines how much the envelope affects the filter. If turned all the way down, the filter produces a flat sound with no envelope. When turned up, the envelope becomes more noticeable, expanding the minimum and maximum range of the filter.
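
One simple way to picture the "env mod" control is as a scaling factor between the envelope and the filter's cutoff frequency. The linear mapping, the parameter names and the 5000 Hz sweep range below are assumptions for illustration; actual synthesizers differ in how they scale this control.

```python
def modulated_cutoff(base_cutoff_hz, envelope_level, env_mod, max_sweep_hz=5000.0):
    """Filter cutoff under envelope control.

    envelope_level and env_mod are both expected in the range 0..1.
    With env_mod at 0 the envelope has no effect on the cutoff.
    """
    return base_cutoff_hz + env_mod * envelope_level * max_sweep_hz

flat = modulated_cutoff(500.0, 1.0, 0.0)   # env mod fully down: cutoff stays at 500 Hz
swept = modulated_cutoff(500.0, 1.0, 1.0)  # env mod fully up: cutoff peaks at 5500 Hz
```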

ADSR envelope

When an acoustic musical instrument produces sound, the loudness and spectral content of the sound change over time in ways that vary from instrument to instrument. The "attack" and "decay" of a sound have a great effect on the instrument's sonic character. Sound synthesis techniques often employ an envelope generator that controls a sound's parameters at any point in its duration. Most often this is an "ADSR" (Attack Decay Sustain Release) envelope, which may be applied to overall amplitude control, filter frequency, etc. The envelope may be a discrete circuit or module, or implemented in software. The contour of an ADSR envelope is specified using four parameters:

- Attack time is the time taken for initial run-up of level from nil to peak, beginning when the key is first pressed.

- Decay time is the time taken for the subsequent run down from the attack level to the designated sustain level.

- Sustain level is the level during the main sequence of the sound's duration, until the key is released.

- Release time is the time taken for the level to decay from the sustain level to zero after the key is released.
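
The four parameters above can be turned into a level-versus-time function. The sketch below uses straight-line segments for simplicity (many hardware envelopes are closer to exponential curves), and the function and parameter names are illustrative.

```python
def adsr_level(t, attack, decay, sustain, release, key_off_time):
    """Envelope level (0..1) at time t in seconds; the key is pressed at t = 0.

    attack, decay and release are times in seconds; sustain is a level (0..1).
    """
    def held(time):
        # Level while the key is still down.
        if time < attack:
            return time / attack
        if time < attack + decay:
            return 1.0 - (1.0 - sustain) * (time - attack) / decay
        return sustain

    if t < 0:
        return 0.0
    if t < key_off_time:
        return held(t)
    # After key release: fall linearly to zero from wherever the envelope was.
    elapsed = t - key_off_time
    if elapsed >= release:
        return 0.0
    return held(key_off_time) * (1.0 - elapsed / release)

# Attack 0.1 s, decay 0.2 s, sustain level 0.5, release 0.3 s, key released at 1.0 s.
levels = [adsr_level(t, 0.1, 0.2, 0.5, 0.3, 1.0) for t in (0.05, 0.2, 0.5, 1.15, 2.0)]
```

Multiplying an oscillator's output by this level gives an amplitude envelope; feeding it to a filter cutoff instead gives a filter envelope.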

An early implementation of ADSR can be found on the Hammond Novachord in 1938 (which predates the first Moog synthesizer by over 25 years). A seven-position rotary knob set preset ADS parameters for all 72 notes; a footpedal controlled release time. The notion of ADSR was specified by Vladimir Ussachevsky (then head of the Columbia-Princeton Electronic Music Centre) in 1965 while suggesting improvements for Bob Moog's pioneering work on synthesizers. The parameters were originally notated (T1, T2, Esus, T3); ARP later simplified these to the current form (Attack time, Decay time, Sustain level, Release time).

Some electronic musical instruments allow the ADSR envelope to be inverted, which results in opposite behaviour compared to the normal ADSR envelope. During the attack phase, the modulated sound parameter fades from the maximum amplitude to zero then, during the decay phase, rises to the value specified by the sustain parameter. After the key has been released the sound parameter rises from sustain amplitude back to maximum amplitude.

A common variation of the ADSR on some synthesizers, such as the Korg MS-20, is ADSHR (attack, decay, sustain, hold, release). By adding a "hold" parameter, the system allows notes to be held at the sustain level for a fixed length of time before decaying. The General Instrument AY-3-8912 sound chip included a hold time parameter only; the sustain level was not programmable. Another common variation in the same vein is the AHDSR (attack, hold, decay, sustain, release) envelope, in which the "hold" parameter controls how long the envelope stays at full volume before entering the decay phase. Multiple attack, decay and release settings may be found on more sophisticated models.

Certain synthesizers also allow for a delay parameter before the attack. Modern synthesizers like the Dave Smith Instruments Prophet '08 have DADSR (delay, attack, decay, sustain, release) envelopes. The delay setting determines the length of silence between hitting a note and the attack. Some software synthesizers, such as Image-Line's 3xOSC (included for free with their DAW FL Studio) have DAHDSR (delay, attack, hold, decay, sustain, release) envelopes.

LFO

A low-frequency oscillator (LFO) generates an electronic signal, usually below 20 Hz. LFO signals create a rhythmic pulse or sweep, often used in vibrato, tremolo and other effects. In certain genres of electronic music, the LFO signal can control the cutoff frequency of a VCF to make a rhythmic wah-wah sound, or the signature dubstep wobble bass.
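
Tremolo and vibrato differ only in what the LFO is routed to: amplitude in the first case, pitch in the second. A minimal sketch, assuming a sine-shaped LFO and illustrative function names and depths:

```python
import math

def lfo(rate_hz, t):
    """Sine-shaped low-frequency oscillator, output in -1..1."""
    return math.sin(2 * math.pi * rate_hz * t)

def tremolo_tone(freq_hz, lfo_rate_hz, depth, n_samples, sample_rate=44100):
    """The LFO modulates amplitude; depth 0..1 sets how far the gain dips below 1."""
    out = []
    for n in range(n_samples):
        t = n / sample_rate
        gain = 1.0 - depth * 0.5 * (1.0 + lfo(lfo_rate_hz, t))
        out.append(gain * math.sin(2 * math.pi * freq_hz * t))
    return out

def vibrato_tone(freq_hz, lfo_rate_hz, depth_hz, n_samples, sample_rate=44100):
    """The LFO modulates pitch: the frequency swings +/- depth_hz around freq_hz."""
    out, phase = [], 0.0
    for n in range(n_samples):
        t = n / sample_rate
        instantaneous_hz = freq_hz + depth_hz * lfo(lfo_rate_hz, t)
        phase += 2 * math.pi * instantaneous_hz / sample_rate  # accumulate phase
        out.append(math.sin(phase))
    return out

wobbling_volume = tremolo_tone(440.0, 5.0, 0.5, 4410)
wobbling_pitch = vibrato_tone(440.0, 5.0, 6.0, 4410)
```

Routing the same LFO to a filter's cutoff frequency instead would give the rhythmic wah-wah effect described above.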

Patch

A synthesizer patch (some manufacturers chose the term program) is a sound setting. Modular synthesizers used cables ("patch cords") to connect the different sound modules together. Since these machines had no memory to save settings, musicians wrote down the locations of the patch cables and knob positions on a "patch sheet" (which usually showed a diagram of the synthesizer). Ever since, an overall sound setting for any type of synthesizer has been known as a patch.

In the mid-to-late 1970s, patch memory (allowing storage and loading of 'patches' or 'programs') began to appear in synths like the Oberheim Four-voice (1975/1976) and Sequential Circuits Prophet-5 (1977/1978). After MIDI was introduced in 1983, more and more synthesizers could import or export patches via MIDI SysEx (System Exclusive) commands. When a synthesizer patch is uploaded to a personal computer that has patch editing software installed, the user can alter the parameters of the patch and download it back to the synthesizer. Because there is no standard patch language, it is rare that a patch generated on one synthesizer can be used on a different model. However, manufacturers sometimes design a family of synthesizers to be compatible.
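
A System Exclusive message is a run of 7-bit data bytes framed by a start byte (0xF0) and an end byte (0xF7), with a manufacturer ID after the start byte. The sketch below wraps a hypothetical patch in that frame. The parameter layout is invented for illustration, which is exactly the part that differs between real synthesizers; the ID 0x7D is the value set aside for non-commercial and educational use.

```python
SYSEX_START = 0xF0
SYSEX_END = 0xF7
NON_COMMERCIAL_ID = 0x7D  # manufacturer ID reserved for non-commercial/educational use

def patch_to_sysex(params):
    """Wrap a list of 7-bit parameter values (0..127) in a SysEx frame.

    The meaning and order of the parameters is a made-up layout.
    """
    if any(not 0 <= p <= 127 for p in params):
        raise ValueError("SysEx data bytes must be in the range 0..127")
    return bytes([SYSEX_START, NON_COMMERCIAL_ID, *params, SYSEX_END])

def sysex_to_patch(message):
    """Recover the parameter list from a message built by patch_to_sysex."""
    if (len(message) < 3 or message[0] != SYSEX_START
            or message[1] != NON_COMMERCIAL_ID or message[-1] != SYSEX_END):
        raise ValueError("not a SysEx message in the assumed layout")
    return list(message[2:-1])

# Hypothetical patch: cutoff, resonance, attack, decay, sustain, release.
dump = patch_to_sysex([64, 20, 0, 45, 100, 30])
restored = sysex_to_patch(dump)
```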

Control interfaces

Modern synthesizers often look like small pianos, though with many additional knob and button controls. These are integrated controllers, where the sound synthesis electronics are integrated into the same package as the controller. However, many early synthesizers were modular and keyboardless, while most modern synthesizers may be controlled via MIDI, allowing other means of playing such as:

- Fingerboards (ribbon controllers) and touchpads

- Wind controllers

- Guitar-style interfaces

- Drum pads

- Music sequencers

- Non-contact interfaces akin to theremins

- Tangible interfaces such as the Reactable and AudioCubes

- Various auxiliary input devices, including wheels for pitch bend and modulation, footpedals for expression and sustain, breath controllers, beam controllers, etc.

Fingerboard controller

A ribbon controller or other violin-like user interface may be used to control synthesizer parameters. The concept dates to Léon Theremin's first conception of it in 1922 and his 1932 Fingerboard Theremin and Keyboard Theremin, Maurice Martenot's 1928 Ondes Martenot (sliding a metal ring), and Friedrich Trautwein's 1929 Trautonium (finger pressure); it was later also used by Robert Moog. The ribbon controller has no moving parts. Instead, a finger pressed down and moved along it creates an electrical contact at some point along a pair of thin, flexible longitudinal strips whose electric potential varies from one end to the other. Older fingerboards used a long wire pressed to a resistive plate. A ribbon controller is similar to a touchpad, but it only registers linear motion. Although it may be used to operate any parameter that is affected by control voltages, a ribbon controller is most commonly associated with pitch bending.

Fingerboard-controlled instruments include the Trautonium (1929), Hellertion (1929) and Heliophon (1936), Electro-Theremin (Tannerin, late 1950s), Persephone (2004), and the Swarmatron (2004). A ribbon controller is used as an additional controller in the Yamaha CS-80 and CS-60, the Korg Prophecy and Korg Trinity series, the Kurzweil synthesizers, Moog synthesizers, and others.

Rock musician Keith Emerson used a ribbon controller with the Moog modular synthesizer from 1970 onward. In the late 1980s, keyboards in the synth lab at Berklee College of Music were equipped with membrane-thin ribbon-style controllers that output MIDI. They functioned as MIDI managers, with their programming language printed on their surface, and as expression/performance tools. Designed by Jeff Tripp of Perfect Fretworks Co., they were known as Tripp Strips. Such ribbon controllers can serve as a main MIDI controller instead of a keyboard, as with the Continuum instrument.

Wind controllers

Wind controllers (and wind synthesizers) are convenient for woodwind and brass players, being designed along the lines of those instruments. These are usually either analog or MIDI controllers, and sometimes include their own built-in sound modules (synthesizers). In addition to following the key arrangements and fingering of those instruments, the controllers have breath-operated pressure transducers, and may have gate extractors, velocity sensors, and bite sensors. Saxophone-style controllers have included the Lyricon, and products by Yamaha, Akai, and Casio. The mouthpieces range from alto clarinet to alto saxophone sizes. A bassoon-style controller was also released by Eigenlabs (2009). Melodica- or recorder-style controllers have included the Martinetta (1975) and Variophon (1980), Tubophon, and Joseph Zawinul's custom Korg Pepe. A harmonica-style interface was the Millionizer 2000 (c.1983).

Trumpet-style controllers have included products by Steiner/Crumar/Akai, Yamaha, and Morrison. Breath controllers may also be used as an adjunct to a conventional synthesizer; examples are the Crumar Steiner Masters Touch and the Yamaha Breath Controller and its compatible products. Several controllers also provide breath-like articulation capabilities.

Accordion controllers use pressure transducers on bellows for articulation.

Others

Other controllers include the Theremin, lightbeam controllers, touch buttons (touche d’intensité) on the Ondes Martenot, and various types of footpedals. Envelope-following systems, the most sophisticated being the vocoder, follow the power or amplitude of an input audio signal instead of the breath pressure used by wind controllers. The Talk box offers more direct articulation, using the vocal tract without breath, although it is rarely categorized as a synthesizer.

MIDI control

Synthesizers became easier to integrate and synchronize with other electronic instruments and controllers with the introduction of Musical Instrument Digital Interface (MIDI) in 1983. First proposed in 1981 by engineer Dave Smith of Sequential Circuits, the MIDI standard was developed by a consortium now known as the MIDI Manufacturers Association. MIDI is an opto-isolated serial interface and communication protocol. It provides for the transmission from one device or instrument to another of real-time performance data. This data includes note events, commands for the selection of instrument presets (i.e. sounds, or programs or patches, previously stored in the instrument's memory), the control of performance-related parameters such as volume, effects levels and the like, as well as synchronization, transport control and other types of data. MIDI interfaces are now almost ubiquitous on music equipment and are commonly available on personal computers (PCs).
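
The note events, preset selections and controller changes described above travel as short byte messages: a status byte (message type plus channel number) followed by one or two 7-bit data bytes. A minimal sketch of how a few channel messages are laid out; the helper names are illustrative and not part of any library.

```python
def _status(kind, channel):
    """Combine a message type (high nibble) with a channel number 0..15 (low nibble)."""
    if not 0 <= channel <= 15:
        raise ValueError("MIDI channel must be in the range 0..15")
    return kind | channel

def note_on(channel, note, velocity):
    """Note event: start `note` (0..127) with the given key velocity (0..127)."""
    return bytes([_status(0x90, channel), note & 0x7F, velocity & 0x7F])

def note_off(channel, note, velocity=0):
    """Note event: stop `note`."""
    return bytes([_status(0x80, channel), note & 0x7F, velocity & 0x7F])

def program_change(channel, program):
    """Select an instrument preset (program or patch) stored in the device."""
    return bytes([_status(0xC0, channel), program & 0x7F])

def control_change(channel, controller, value):
    """Set a performance parameter; controller 7 is channel volume."""
    return bytes([_status(0xB0, channel), controller & 0x7F, value & 0x7F])

# Middle C (note 60) on the first channel, preset 5, then full volume.
messages = [note_on(0, 60, 100), program_change(0, 5), control_change(0, 7, 127)]
```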

The General MIDI (GM) software standard was devised in 1991 to serve as a consistent way of describing a set of over 200 tones (including percussion) available to a PC for playback of musical scores. For the first time, a given MIDI preset consistently produced a specific instrumental sound on any GM-conforming device. The Standard MIDI File (SMF) format (extension .mid) combined MIDI events with delta times (a form of time-stamping) and became a popular standard for exchange of music scores between computers. In the case of SMF playback using integrated synthesizers (as in computers and cell phones), the hardware component of the MIDI interface design is often unneeded.
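The delta times mentioned above are stored in Standard MIDI Files as variable-length quantities: seven bits per byte, most significant group first, with the top bit set on every byte except the last. The sketch below shows that encoding and how it is interleaved with events. The function names are illustrative assumptions, and the output is only a fragment of track data, not a complete .mid file (it omits the header and track chunks and the end-of-track event).

```python
def encode_delta_time(ticks: int) -> bytes:
    """Encode a delta time as an SMF variable-length quantity."""
    if not 0 <= ticks <= 0x0FFFFFFF:
        raise ValueError("delta time must fit in 28 bits")
    groups = [ticks & 0x7F]  # last byte: continuation bit clear
    ticks >>= 7
    while ticks:
        groups.append((ticks & 0x7F) | 0x80)  # earlier bytes: bit set
        ticks >>= 7
    return bytes(reversed(groups))


def decode_delta_time(data: bytes, offset: int = 0):
    """Return (ticks, next_offset) for the quantity starting at offset."""
    value = 0
    while True:
        byte = data[offset]
        offset += 1
        value = (value << 7) | (byte & 0x7F)
        if not byte & 0x80:
            return value, offset


def serialize_events(events) -> bytes:
    """Turn (absolute_tick, message_bytes) pairs, sorted by tick,
    into delta-time-prefixed track data."""
    out = bytearray()
    previous_tick = 0
    for tick, message in events:
        out += encode_delta_time(tick - previous_tick)
        out += message
        previous_tick = tick
    return bytes(out)
```

A note held for 480 ticks would be serialized as `serialize_events([(0, b"\x90\x3c\x64"), (480, b"\x80\x3c\x00")])`, in which the second event is preceded by the two-byte delta time `83 60`.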

Open Sound Control (OSC) is another music data specification, designed for use over networks. In contrast with MIDI, OSC allows thousands of synthesizers or computers to share music performance data over the Internet in real time.
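To give a feel for what OSC data looks like on the wire, the sketch below encodes a single OSC message following the OSC 1.0 layout: an address pattern, a type tag string beginning with a comma, and big-endian arguments, with each string null-terminated and padded to a multiple of four bytes. The function names and the address `/synth/note` are hypothetical, and only the int32, float32 and string argument types are handled.

```python
import struct


def _osc_string(text: str) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_osc_message(address: str, *args) -> bytes:
    """Encode one OSC message with int, float, or str arguments."""
    type_tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not handled in this sketch")
        if isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)  # 32-bit big-endian integer
        elif isinstance(arg, float):
            type_tags += "f"
            payload += struct.pack(">f", arg)  # 32-bit big-endian float
        elif isinstance(arg, str):
            type_tags += "s"
            payload += _osc_string(arg)
        else:
            raise TypeError(f"unsupported argument type: {type(arg).__name__}")
    return _osc_string(address) + _osc_string(type_tags) + payload
```

A call such as `encode_osc_message("/synth/note", 60, 0.5)` yields a 24-byte packet, which would typically be sent as a single UDP datagram to the receiving device.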

Typical roles

Synth lead

In popular music, a synth lead is generally used for playing the main melody of a song, but it is also often used for creating rhythmic or bass effects. Although most commonly heard in electronic dance music, synth leads have been used extensively in hip-hop since the 1980s and in rock songs since the 1970s. Much modern popular music relies on the synth lead to provide a musical hook that sustains the listener's interest throughout a song. Examples of heavy synth lead use, representative of the crunk genre, include Lil Jon's "Snap Yo Fingers" and Usher's "Yeah!".

Synth pad

A synth pad is a sustained chord or tone generated by a synthesizer, often employed for background harmony and atmosphere in much the same fashion that a string section is often used in acoustic music. Typically, a synth pad plays many whole or half notes, sometimes holding the same note while a lead voice sings or plays an entire musical phrase. Often, the sounds used for synth pads have a vaguely organ-like, string-like, or vocal timbre. Much popular music in the 1980s employed synth pads, this being the era of polyphonic synthesizers, as did the then-new styles of smooth jazz and New Age music. One of many well-known songs from the era to incorporate a synth pad is "West End Girls" by the Pet Shop Boys, who were noted users of the technique.

The main feature of a synth pad is a very long attack and decay time with an extended sustain. In some instances, pulse-width modulation (PWM) using a square wave oscillator is added to create a "vibrating" sound.
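A pad-like patch of this kind can be sketched in a few lines. The example below assumes a linear attack/decay/sustain/release envelope with slow attack and release, and a pulse oscillator whose duty cycle is swept by a slow low-frequency oscillator (LFO). All function names and parameter values are illustrative assumptions chosen only to show the shape of the idea; times are in seconds and are assumed to be positive.

```python
import math


def _held_level(t, attack, decay, sustain):
    """Envelope level while the key is still held."""
    if t < attack:
        return t / attack  # slow linear fade-in
    if t < attack + decay:
        return 1.0 - (1.0 - sustain) * (t - attack) / decay
    return sustain


def adsr_level(t, note_length, attack=2.0, decay=1.5, sustain=0.8, release=3.0):
    """Envelope level (0..1) at t seconds after the key is pressed."""
    if t < 0:
        return 0.0
    if t <= note_length:
        return _held_level(t, attack, decay, sustain)
    # After key release, fade linearly from the level reached so far.
    start = _held_level(note_length, attack, decay, sustain)
    return max(0.0, start * (1.0 - (t - note_length) / release))


def pwm_sample(t, freq=110.0, lfo_rate=0.5, depth=0.3):
    """Pulse wave whose width is modulated by a slow sine LFO."""
    duty = 0.5 + depth * math.sin(2 * math.pi * lfo_rate * t)
    phase = (t * freq) % 1.0
    return 1.0 if phase < duty else -1.0


def render_pad(duration, note_length, sample_rate=8000):
    """Return a list of samples for one held pad note."""
    count = int(duration * sample_rate)
    return [
        adsr_level(i / sample_rate, note_length) * pwm_sample(i / sample_rate)
        for i in range(count)
    ]
```

With these defaults, the note takes two seconds to reach full level and three seconds to die away after the key is released, while the pulse width drifts between 20% and 80% once every two seconds.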

Synth bass

The bass synthesizer (or "bass synth") is used to create sounds in the bass range, from simulations of the electric bass or double bass to distorted, buzz-saw-like artificial bass sounds, by generating and combining signals of different frequencies. Bass synth patches may incorporate a range of sounds and tones, including wavetable-style, analog, and FM-style bass sounds, delay effects, distortion effects, and envelope filters. A modern digital synthesizer uses a frequency synthesizer microprocessor component to generate signals of different frequencies. While most bass synths are controlled by electronic keyboards or pedalboards, some performers use an electric bass with MIDI pickups to trigger a bass synthesizer.

In the 1970s, miniaturized solid-state components allowed self-contained, portable instruments such as the Moog Taurus, a 13-note pedal keyboard played by the feet. The Moog Taurus was used in live performances by a range of pop, rock, and blues-rock bands. Genesis bass player Mike Rutherford used a Dewtron "Mister Bassman" for the recording of the band's album Nursery Cryme in August 1971. Another early use of bass synthesizer was in 1972, on Whistle Rymes, a solo album by John Entwistle (the bassist for The Who). Stevie Wonder introduced synth bass to a pop audience in the early 1970s, notably on "Superstition" (1972) and "Boogie On Reggae Woman" (1974). In 1977, Parliament's funk single "Flashlight" used the bass synthesizer. Lou Reed, widely considered a pioneer of electric guitar textures, played bass synthesizer on "Families", from his 1979 album The Bells.

When the programmable music sequencer became widely available in the 1980s (e.g., the Synclavier), bass synths were used to create highly syncopated rhythms and complex, rapid basslines. A particularly influential bass synthesizer was the Roland TB-303, which followed the Firstman SQ-01. Released in late 1981, it featured a built-in sequencer and later became strongly associated with acid house music. Charanjit Singh, in 1982, was one of the first to use it, though it did not achieve wide popularity until Phuture's "Acid Tracks" in 1987.

In the 2000s, several companies such as Boss and Akai produced bass synthesizer effect pedals for electric bass players, which simulate the sound of an analog or digital bass synth. With these devices, a bass guitar is used to generate synth bass sounds. The BOSS SYB-3 was one of the early bass synthesizer pedals. The SYB-3 uses digital signal processing (DSP) to reproduce the sounds of analog synthesizers, offering saw, square, and pulse waveforms and a user-adjustable filter cutoff. The Akai bass synth pedal contains a four-oscillator synthesizer with user-selectable parameters (attack, decay, envelope depth, dynamics, cutoff, resonance). Bass synthesizer software allows performers to use MIDI to integrate the bass sounds with other synthesizers or drum machines. Bass synthesizers often provide samples from vintage 1970s and 1980s bass synths. Some bass synths are built into an organ-style pedalboard or button board.

Arpeggiator

An arpeggiator is a feature available on several synthesizers that automatically steps through a sequence of notes based on an input chord, thus creating an arpeggio. The notes can often be transmitted to a MIDI sequencer for recording and further editing. An arpeggiator may have controls for the speed, the range, and the order in which the notes play: upwards, downwards, or in a random order. More advanced arpeggiators allow the user to step through a pre-programmed complex sequence of notes, or play several arpeggios at once. Some allow a pattern to be sustained after the keys are released; in this way, a sequence of arpeggio patterns may be built up over time by pressing several keys one after the other. Arpeggiators are also commonly found in software sequencers. Some arpeggiators/sequencers expand these features into a full phrase sequencer, which allows the user to trigger complex, multi-track blocks of sequenced data from a keyboard or input device, typically synchronized with the tempo of the master clock.
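The basic behaviour described above (stepping through the notes of a held chord with a chosen order, range and speed) can be sketched as follows. This is a simplified illustration, not the design of any particular instrument: the function names, the use of MIDI note numbers, and the treatment of range as a number of octaves are all assumptions.

```python
import random


def arpeggiate(chord, mode="up", octaves=1, steps=8, seed=None):
    """Return a list of `steps` notes derived from the held chord.

    chord   -- iterable of MIDI note numbers currently held
    mode    -- "up", "down", or "random"
    octaves -- range control: how many octaves the pattern spans
    seed    -- optional seed so that random mode is repeatable
    """
    pool = sorted({note + 12 * o for note in chord for o in range(octaves)})
    if not pool:
        return []
    if mode == "random":
        rng = random.Random(seed)
        return [rng.choice(pool) for _ in range(steps)]
    if mode == "up":
        cycle = pool
    elif mode == "down":
        cycle = pool[::-1]
    else:
        raise ValueError("mode must be 'up', 'down', or 'random'")
    return [cycle[i % len(cycle)] for i in range(steps)]


def step_duration(bpm, steps_per_beat=4):
    """Speed control: seconds per step (4 steps per beat = 1/16 notes)."""
    return 60.0 / bpm / steps_per_beat
```

Holding a C minor chord, `arpeggiate([60, 63, 67], "up", octaves=2, steps=6)` returns `[60, 63, 67, 72, 75, 79]`, and at 120 beats per minute `step_duration(120)` gives 0.125 seconds per 1/16 note.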

Sound sample of arpeggiator: a Eurodance synthesizer riff using a rapid 1/16-note arpeggiator.

Arpeggiators seem to have grown out of the accompaniment systems of electronic organs from the mid-1960s to the mid-1970s, and possibly out of hardware sequencers of the mid-1960s, such as the 8/16-step analog sequencer on modular synthesizers (Buchla Series 100 (1964/1966)). They were commonly fitted to keyboard instruments through the late 1970s and early 1980s. Notable examples are the RMI Harmonic Synthesizer (1974), Roland Jupiter-8, Oberheim OB-8, Roland SH-101, Sequential Circuits Six-Trak and Korg Polysix. A famous example can be heard on Duran Duran's song "Rio", in which the arpeggiator on a Roland Jupiter-4 plays a C minor chord in random mode. Arpeggiators fell out of favour in the late 1980s and early 1990s and were absent from the most popular synthesizers of the period, but renewed interest in analog synthesizers during the 1990s, and the use of rapid-fire arpeggios in several popular dance hits, brought them back.