Probability space

Background to the schools Wikipedia

This Schools selection was originally chosen by SOS Children for schools in the developing world without internet access. It is available as an intranet download. See http://www.soschildren.org/sponsor-a-child to find out about child sponsorship.

The definition of the probability space is the foundation of probability theory. It was introduced by Kolmogorov in the 1930s. For an algebraic alternative to Kolmogorov's approach, see algebra of random variables.

Definition

A probability space $(\Omega, \mathcal{F}, P)$ is a measure space with a measure $P$ that satisfies the probability axioms.

The sample space $\Omega$ is a nonempty set whose elements are known as outcomes or states of nature and are often given the symbol $\omega$. The set of all the possible outcomes of an experiment is known as the sample space of the experiment.

Events

The second item, $\mathcal{F}$, is a σ-algebra of subsets of $\Omega$. Its elements are called events; they are the sets of outcomes to which a probability can be assigned.

Because $\mathcal{F}$ is a σ-algebra, it contains $\Omega$; also, the complement of any event is an event, and the union of any (finite or countably infinite) sequence of events is an event.
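For a finite sample space, these closure properties can be checked mechanically. The Python sketch below is illustrative only: the function name `is_sigma_algebra` and the example sets are hypothetical. It relies on the fact that, on a finite set, closure under countable unions reduces to closure under unions of pairs, because only finitely many distinct subsets exist.

```python
from itertools import combinations

def is_sigma_algebra(omega, family):
    """Hypothetical helper: is `family` a σ-algebra on the finite set `omega`?

    On a finite set, closure under countable unions reduces to closure
    under unions of pairs, since only finitely many distinct sets exist.
    """
    omega = frozenset(omega)
    family = {frozenset(s) for s in family}
    if omega not in family:  # must contain the whole sample space
        return False
    for s in family:
        # Every member must be a subset of omega, and its complement must be present.
        if not s <= omega or omega - s not in family:
            return False
    for a, b in combinations(family, 2):
        if a | b not in family:  # closed under (finite) unions
            return False
    return True

omega = {1, 2, 3, 4}
print(is_sigma_algebra(omega, [set(), {1, 2}, {3, 4}, omega]))  # True
print(is_sigma_algebra(omega, [set(), {1}, omega]))  # False: complement {2, 3, 4} is missing
```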

When the sample space is the set of real numbers, the events are usually taken to be the Borel-measurable or Lebesgue-measurable sets.

Probability measure

The probability measure $P$ is a function from $\mathcal{F}$ to the real numbers that assigns to each event a probability between 0 and 1. It must satisfy the probability axioms; in particular, $P(\Omega) = 1$, and the probability of the union of a countable sequence of pairwise disjoint events is the sum of their probabilities.

Because $P$ is defined on $\mathcal{F}$ rather than on every subset of $\Omega$, the set of events is not required to be the complete power set of the sample space; that is, not every set of outcomes is necessarily an event.
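As a minimal sketch, assuming a finite sample space (where countable additivity reduces to additivity over pairs of disjoint events), the hypothetical function below checks the axioms for a table of probabilities. The example σ-algebra on {1, 2, 3, 4} is an assumption chosen for illustration; it is smaller than the power set, so a set such as {1} is simply not an event and has no probability.

```python
from fractions import Fraction
from itertools import combinations

def satisfies_probability_axioms(omega, prob):
    """Hypothetical helper: check the probability axioms on a finite space.

    `prob` maps each event (a frozenset) to its probability; its keys are
    assumed to already form a σ-algebra on `omega`.
    """
    omega = frozenset(omega)
    if prob.get(omega) != 1:  # P(Ω) = 1
        return False
    if any(p < 0 for p in prob.values()):  # P(E) >= 0 for every event E
        return False
    for a, b in combinations(prob, 2):
        # Additivity: P(A ∪ B) = P(A) + P(B) whenever A ∩ B is empty.
        if not (a & b) and prob.get(a | b) != prob[a] + prob[b]:
            return False
    return True

# Illustrative σ-algebra on {1, 2, 3, 4} that is smaller than the power set.
half = Fraction(1, 2)
prob = {
    frozenset(): 0,
    frozenset({1, 2}): half,
    frozenset({3, 4}): half,
    frozenset({1, 2, 3, 4}): 1,
}
print(satisfies_probability_axioms({1, 2, 3, 4}, prob))  # True
print(frozenset({1}) in prob)  # False: {1} is not an event, so P({1}) is undefined
```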

When more than one measure is under discussion, probability measures are often written in blackboard bold to distinguish them. When there is only one probability measure under discussion, it is often denoted by Pr, meaning "probability of".

Related concepts

Probability distribution

Any probability distribution defines a probability measure.

Random variables

A random variable $X$ is a measurable function from the sample space $\Omega$ to another measurable space called the state space.

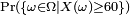

If $X$ is a real-valued random variable, then the notation $P(X \ge 60)$ is shorthand for $P(\{\omega \in \Omega : X(\omega) \ge 60\})$, assuming that $\{\omega \in \Omega : X(\omega) \ge 60\}$ is an event.
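The shorthand can be made concrete on a small finite space. In this sketch the sample space (two fair dice), the uniform measure `P` and the random variable `X` (the sum of the dice) are assumed purely for illustration and are not part of the article; $P(X \ge 10)$ is computed as the probability of the preimage $\{\omega : X(\omega) \ge 10\}$.

```python
from fractions import Fraction
from itertools import product

# Hypothetical example: ordered outcomes of rolling two fair dice.
omega = list(product(range(1, 7), repeat=2))

def P(event):
    """Uniform probability measure on omega: |event| / |omega|."""
    return Fraction(len(event), len(omega))

def X(w):
    """Random variable: the sum of the two dice."""
    return w[0] + w[1]

# P(X >= 10) is shorthand for P({w in omega : X(w) >= 10}).
preimage = {w for w in omega if X(w) >= 10}
print(P(preimage))  # 1/6
```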

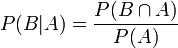

Conditional probability

Kolmogorov's definition of probability spaces gives rise to the natural concept of conditional probability. Every event $A$ with non-zero probability (that is, $P(A) > 0$) defines another probability measure $P(B \mid A) = \frac{P(A \cap B)}{P(A)}$ on the space. This is usually read as the "probability of B given A".
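Using an assumed two-dice example (not from the article), the sketch below computes $P(B \mid A)$ directly from the definition; the helper name `conditional` and the events are hypothetical. Evaluating $P(\Omega \mid A)$ returns 1, consistent with $P(\cdot \mid A)$ being a probability measure.

```python
from fractions import Fraction
from itertools import product

# Hypothetical example: two fair dice with the uniform measure.
omega = frozenset(product(range(1, 7), repeat=2))

def P(event):
    return Fraction(len(event & omega), len(omega))

def conditional(B, A):
    """P(B | A) = P(A ∩ B) / P(A), defined only when P(A) > 0."""
    if P(A) == 0:
        raise ValueError("conditioning event must have positive probability")
    return P(A & B) / P(A)

A = frozenset(w for w in omega if w[0] == 6)     # first die shows 6
B = frozenset(w for w in omega if sum(w) >= 10)  # total is at least 10
print(conditional(B, A))      # 1/2
print(conditional(omega, A))  # 1
```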

Independence

Two events $A$ and $B$ are said to be independent if $P(A \cap B) = P(A)P(B)$.

Two random variables $X$ and $Y$ are said to be independent if any event defined in terms of $X$ is independent of any event defined in terms of $Y$. Formally, they generate independent σ-algebras, where two σ-algebras $\mathcal{G}$ and $\mathcal{H}$ contained in $\mathcal{F}$ are said to be independent if every element of $\mathcal{G}$ is independent of every element of $\mathcal{H}$.

The concept of independence is where probability theory departs from measure theory.
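Independence of two events can be tested directly against the product rule. This sketch again assumes two fair dice (an illustration, not from the article); the event names and the helper `independent` are hypothetical. Exact `Fraction` arithmetic avoids spurious failures from floating-point rounding.

```python
from fractions import Fraction
from itertools import product

# Hypothetical example: two fair dice with the uniform measure.
omega = frozenset(product(range(1, 7), repeat=2))

def P(event):
    return Fraction(len(event), len(omega))

def independent(A, B):
    """Events A and B are independent iff P(A ∩ B) = P(A) P(B)."""
    return P(A & B) == P(A) * P(B)

first_even = frozenset(w for w in omega if w[0] % 2 == 0)
second_high = frozenset(w for w in omega if w[1] >= 5)
total_high = frozenset(w for w in omega if sum(w) >= 10)

print(independent(first_even, second_high))  # True
print(independent(first_even, total_high))   # False
```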

Mutual exclusivity

Two events $A$ and $B$ are said to be mutually exclusive or disjoint if $P(A \cap B) = 0$. (This is weaker than $A \cap B = \varnothing$, which is the definition of disjoint for sets.)

If $A$ and $B$ are disjoint events, then $P(A \cup B) = P(A) + P(B)$. This extends to a (finite or countably infinite) sequence of pairwise disjoint events. However, the probability of the union of an uncountable set of events need not be the sum of their probabilities. For example, if $Z$ is a normally distributed random variable, then $P(Z = x) = 0$ for every real $x$, but $P(Z \in \mathbb{R}) = 1$.
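Additivity under the weaker definition follows from additivity over genuinely disjoint sets. Write $A \cup B$ as the disjoint union of $A \setminus B$, $A \cap B$ and $B \setminus A$ (all events, since $\mathcal{F}$ is a σ-algebra), and use $P(A) = P(A \setminus B) + P(A \cap B)$ and $P(B) = P(B \setminus A) + P(A \cap B)$:

$$
\begin{aligned}
P(A \cup B) &= P(A \setminus B) + P(A \cap B) + P(B \setminus A) \\
&= \bigl[P(A) - P(A \cap B)\bigr] + P(A \cap B) + \bigl[P(B) - P(A \cap B)\bigr] \\
&= P(A) + P(B) - P(A \cap B).
\end{aligned}
$$

So if $P(A \cap B) = 0$, then $P(A \cup B) = P(A) + P(B)$.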

The event $A \cap B$ is referred to as A AND B, and the event $A \cup B$ as A OR B.

Examples

First example

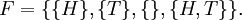

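If the space concerns one flip of a fair coin, then the outcomes are heads and tails: $\Omega = \{H, T\}$.

The σ-algebra $\mathcal{F} = 2^{\Omega}$ (the power set of $\Omega$) contains $2^2 = 4$ events: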

- {H}: heads,

- {T}: tails,

- {}: neither heads nor tails, and

- {H,T}: heads or tails.

So, $\mathcal{F} = \{\{H\}, \{T\}, \varnothing, \{H, T\}\}$.

There is a fifty percent chance of tossing heads and a fifty percent chance of tossing tails: $P(\{H\}) = P(\{T\}) = 0.5$. The chance of tossing neither is zero: $P(\varnothing) = 0$, and the chance of tossing one or the other is one: $P(\{H, T\}) = 1$.
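The coin example can be reproduced in a few lines. This sketch builds $\mathcal{F}$ as the power set of $\Omega$ and assigns each event the sum of the probabilities of its outcomes; the helper `power_set` and the `weights` table are assumptions for illustration.

```python
from fractions import Fraction
from itertools import chain, combinations

omega = ("H", "T")
weights = {"H": Fraction(1, 2), "T": Fraction(1, 2)}  # assumed: a fair coin

def power_set(s):
    """Hypothetical helper: all subsets of s, as frozensets."""
    subsets = chain.from_iterable(combinations(s, r) for r in range(len(s) + 1))
    return [frozenset(c) for c in subsets]

events = power_set(omega)
# Probability of an event = sum of the weights of the outcomes it contains.
P = {e: sum((weights[w] for w in e), Fraction(0)) for e in events}

for e in events:
    print(sorted(e), P[e])
```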

Second example

If 100 voters are to be drawn randomly from among all voters in California and asked whom they will vote for governor, then the set of all sequences of 100 Californian votes would be the sample space Ω.

The set of all sequences of 100 Californian voters in which at least 60 will vote for Schwarzenegger is identified with the "event" that at least 60 of the 100 chosen voters will so vote.

Then, $\mathcal{F}$ contains: (1) the set of all sequences of 100 where at least 60 vote for Schwarzenegger; (2) the set of all sequences of 100 where fewer than 60 vote for Schwarzenegger (the complement of (1)); (3) the sample space $\Omega$ as above; and (4) the empty set.

An example of a random variable is the number of voters who will vote for Schwarzenegger in the sample of 100.
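Under an added assumption that is not part of the article, namely that each sampled voter independently votes for Schwarzenegger with the same probability $p$, this random variable has a binomial distribution, and the probability of the event "at least 60 of the 100" can be computed directly. Both the function name `prob_at_least` and the value $p = 0.55$ below are hypothetical.

```python
from math import comb

def prob_at_least(k_min, n, p):
    """P(X >= k_min) for X ~ Binomial(n, p).

    Assumption (not from the article): each of the n sampled voters
    independently votes for the candidate with the same probability p.
    """
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))

# Hypothetical support level of 55%; the article gives no such figure.
print(prob_at_least(60, 100, 0.55))
```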